Ollama v0.19

Unlock faster performance and higher quality responses with Ollama's MLX integration, optimized for Apple Silicon devices.

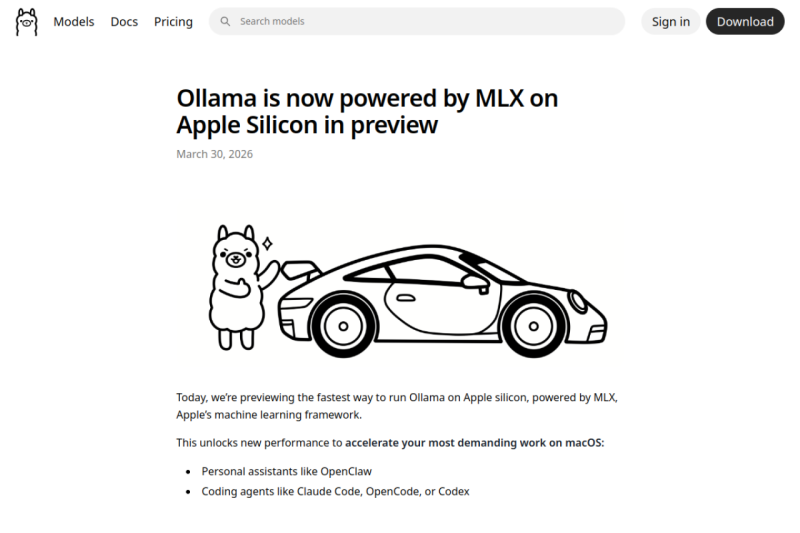

Unlock Unparalleled Performance: Ollama Now Powered by MLX on Apple Silicon

Ollama's integration with MLX on Apple Silicon speeds up demanding applications, such as personal assistants and coding agents, improving both throughput and responsiveness.

Key Features

- Unmatched Speed: Experience the fastest performance on Apple Silicon, powered by MLX, for accelerated work on macOS, including personal assistants like OpenClaw and coding agents like Claude Code.

- Enhanced Model Accuracy: Leverage NVIDIA's NVFP4 format for higher quality responses and production parity, so that results obtained locally are consistent with those from production environments that serve models in the same format.

- Intelligent Caching: Improved caching makes Ollama more responsive, with lower memory utilization and intelligent checkpoints for faster responses.

- Seamless Integration: Easily integrate Ollama with your existing workflows and tools, such as Claude Code and OpenClaw, for a streamlined experience.

- Future-Proof: Stay ahead with support for future models and architectures, ensuring you can adapt to the latest advancements in machine learning and AI.

Ideal For

- Developers and coders looking to accelerate their workflows with AI-powered tools like Claude Code and OpenClaw.

- Researchers and data scientists seeking to leverage the power of MLX and Apple Silicon for demanding projects.

- Businesses and organizations aiming to integrate AI and machine learning into their operations for enhanced efficiency and productivity.

Top Use Cases

- Accelerating coding tasks with Claude Code and OpenClaw for faster development and deployment.

- Enhancing personal assistant capabilities with OpenClaw for more efficient and responsive interactions.

- Streamlining research and data analysis with Ollama's accelerated performance and intelligent caching.

Known Alternatives

- Google's TensorFlow: Ollama offers a more streamlined and integrated experience, specifically optimized for Apple Silicon and MLX.

- Amazon's SageMaker: Ollama provides a more lightweight and adaptable solution, ideal for developers and researchers seeking a flexible AI platform.

Integrations & Ecosystem

- Seamless integration with popular tools like Claude Code, OpenClaw, and Zapier, as well as support for NVIDIA's NVFP4 format and Apple's MLX framework.
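As a rough illustration of how a script or tool might talk to a locally running Ollama server, the sketch below builds a request for Ollama's local REST endpoint (`/api/generate` on the default port 11434) using only the Python standard library. The model name `example-model` is a placeholder and not a model named on this page; substitute one you have already pulled. The helper names `build_generate_request` and `generate` are hypothetical.

```python
import json
import urllib.request

# Default address of a locally running Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the request and return the generated text from the JSON reply."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]


if __name__ == "__main__":
    # "example-model" is a placeholder; this call needs a running Ollama server.
    print(generate("example-model", "Summarize what MLX is in one sentence."))
```

Keeping request construction separate from sending makes the payload easy to inspect or log before anything touches the network.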

Pros & Cons

- Pros: Unmatched performance on Apple Silicon, enhanced model accuracy, and improved caching for responsiveness.

- Limitations: Requires a Mac with more than 32GB of unified memory, and may have limited support for certain architectures and models.

Frequently Asked Questions

- What is the system requirement for running Ollama on Apple Silicon?

- Ollama requires a Mac with more than 32GB of unified memory to ensure optimal performance.

- Can I use Ollama with my existing models and workflows?

- Yes, Ollama supports integration with popular tools like Claude Code and OpenClaw, and allows for easy import of custom models and workflows.
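The memory requirement mentioned in the first answer above can be checked before installing. The following is a minimal sketch, assuming macOS, where `sysctl -n hw.memsize` reports total physical memory in bytes; the function names are hypothetical, and "more than 32GB" is interpreted here as strictly more than 32 GiB.

```python
import subprocess

GIB = 1024 ** 3  # bytes in one gibibyte


def exceeds_memory_requirement(total_bytes: int, required_gib: int = 32) -> bool:
    """Return True when total memory is strictly greater than required_gib."""
    return total_bytes > required_gib * GIB


def read_unified_memory_bytes() -> int:
    """Read total physical memory on macOS via `sysctl -n hw.memsize`."""
    result = subprocess.run(
        ["sysctl", "-n", "hw.memsize"],
        capture_output=True,
        text=True,
        check=True,
    )
    return int(result.stdout.strip())


if __name__ == "__main__":
    total = read_unified_memory_bytes()
    verdict = "meets" if exceeds_memory_requirement(total) else "does not meet"
    print(f"{total / GIB:.0f} GiB of unified memory {verdict} the stated requirement.")
```

Under this interpretation, a Mac with exactly 32GB does not pass the check, since the listing asks for more than 32GB.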

Pricing Model

Freemium

Compare with

- Ollama v0.19 vs StyleList: A strong like-for-like option in AI, Open Source.

- Ollama v0.19 vs Dyad: A strong like-for-like option in AI, Open Source.